AI Walks Into a Bank. The Compliance Officer Doesn't Flinch.

That's not the setup to a joke. That's the news.

For two years, agentic AI has been the technology equivalent of a vendor who shows up to your credit committee meeting in flip-flops. Brilliant, maybe. Fundable, perhaps. But not someone you let near a SAR filing.

Banks did not stay on the sidelines because they lacked imagination. They stayed because every AI demo ended the same way: "And then a human reviews it." Which is fine, until you realize the human is already buried in work, the agent just created ten new items for their queue, and your examiner is asking what your AI governance policy looks like in writing.

Banks were not waiting for another AI breakthrough. They were not looking for more theater from Silicon Valley. They were waiting for someone to explain how agentic AI could survive a compliance exam.

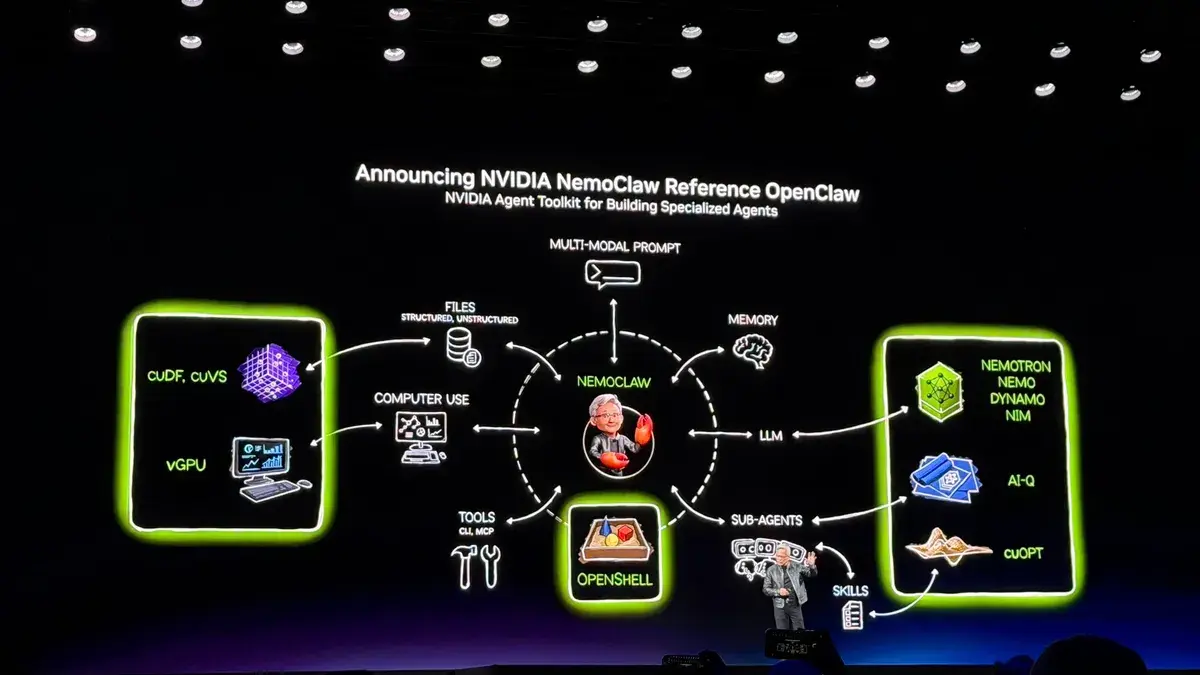

NemoClaw is not a compliance solution. It is not a regulatory filing. It is not magic. What it is, finally, is a control story. And that is the one thing banks have been waiting for someone to tell.

What Changed and What Didn't

Let's be precise, because precision is what banks require.

OpenClaw without a control layer was interesting without being operational. NemoClaw changes that calculus by introducing policy-aware boundaries around what agents can access, what they can do, and what gets logged. In Amberoon's Guardrail AI framework, we describe this as the critical second aspect of responsible AI deployment: hard deterministic boundaries placed around probabilistic AI outputs. NemoClaw is NVIDIA's version of that boundary layer at the infrastructure level.

What it does not do is replace the governance architecture that banks must build on top of it. Governance still drives process. Process still drives technology. Not the other way around. That principle does not change because NVIDIA shipped a new runtime.

Banks still own the compliance logic. NemoClaw gives them a better agentic AI foundation to build it on.
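To make "hard deterministic boundaries placed around probabilistic AI outputs" concrete, here is a minimal sketch of the idea. It is not NemoClaw's API, and every name in it (`AgentPolicy`, `PolicyGate`, the tool and source labels) is a hypothetical chosen for illustration. It assumes the agent proposes an action, a plain allow-list decides whether the action runs, and every decision is logged either way.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical policy: the tools and data sources an agent may touch."""
    allowed_tools: frozenset
    allowed_sources: frozenset


@dataclass
class PolicyGate:
    """Deterministic check that runs before any agent-proposed action."""
    policy: AgentPolicy
    audit_log: list = field(default_factory=list)

    def authorize(self, agent_id: str, tool: str, source: str) -> bool:
        # Pure allow-list lookup: no model output influences this decision.
        allowed = (
            tool in self.policy.allowed_tools
            and source in self.policy.allowed_sources
        )
        # Log every decision, allowed or denied, so reviewers can see both.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "tool": tool,
            "source": source,
            "decision": "allow" if allowed else "deny",
        })
        return allowed


# Illustrative policy: the assistant may only search approved policies.
policy = AgentPolicy(
    allowed_tools=frozenset({"search_policy_library"}),
    allowed_sources=frozenset({"approved_policies"}),
)
gate = PolicyGate(policy)

ok = gate.authorize("bsa-assistant", "search_policy_library", "approved_policies")
blocked = gate.authorize("bsa-assistant", "file_sar", "case_management")
```

In this sketch the bank writes the allow-list and owns the audit log. The runtime only enforces what the policy says, which is the division of labor described above.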

Where the Opportunity Lives

The right framing is not autonomous AI decision-making. It is controlled internal assistance. Agents that work within defined policy and data boundaries to support human judgment, not replace it. Here is where AI starts to matter for community banks:

| Banking Use Case | What NemoClaw May Enable | What the Bank Still Owns |
|---|---|---|
| BSA / AML Investigations | Internal agent workflows searching policies, SAR procedures, typology libraries, and case notes inside a controlled runtime with privacy guardrails | Human investigator review, SAR confidentiality controls, case-management integration, escalation thresholds |
| Credit Memo Drafting | Copilots retrieving policy excerpts, covenant language, and prior committee templates, with PII protections and topic controls | Credit policy mapping, model validation, maker-checker review, human sign-off |
| Treasury / ALCO Research | Internal research agents gathering approved reports in a sandboxed environment with controlled tool access | Approved data feeds, reconciliation controls, limits on autonomous actions, treasury sign-off |
| Compliance Policy Q&A | Bounded assistants that answer from approved policy libraries, not from the open internet | Policy curation, version control, legal review, clear scope limits |
| Board / Management Reporting | First-draft summaries from approved materials inside a secured runtime | Accuracy review, management certification, disclosure controls, board-pack governance |
| Document Triage / Ops Copilots | Always-on assistants for routing, summarizing, and task support with stronger controls than unconstrained agents | Workflow approval logic, audit logs, exception queues, role-based access |

What Banks Should Take From This

NemoClaw is not the finish line. It is the starting gun for a more serious conversation. Expect more vendors to make similar announcements in the coming months.

For community banks, that conversation has three parts. First: which internal workflows benefit most from bounded AI assistance and which are too sensitive to touch right now? Second: does your institution have the governance architecture to actually deploy an agent framework responsibly, or is technology once again being purchased ahead of policy? Third: who is accountable when the agent gets it wrong?

These are not technology questions. They are governance questions. And the answer to all three starts with the same principle that drives Agile Compliance: governance dictates process, process dictates technology. Never the reverse.

In their 2025 Global Banking Annual Review, McKinsey made the case that the next era of banking will not be won by size. It will be won by precision. Their central argument: banks that deploy AI surgically, focused on workflows where it moves the needle most, will separate from those who pile into it out of fear of missing out. In their most likely scenario, AI pioneers could open up a four-point ROTE advantage over slow movers. Slow movers, meanwhile, face pricing competition, disintermediation, and an uncompetitive cost base. The gap, once opened, does not close.

Community banking has always run on a version of that same precision. You know your borrowers by name. You know your market by instinct. You win not by outspending the big banks but by out-knowing them. AI does not change that equation. It extends it.

The banks that get agentic AI right will not be the ones that deployed it fastest. They will be the ones that deployed it most deliberately: with bounded agents in the right workflows, governance architecture that examiners can read, audit trails that boards can sign off on, and a clear answer to the question McKinsey is asking every bank CEO right now: where exactly does AI create value for your institution, and where does it create risk?

That work belongs to the bank. NemoClaw just made it easier to start.

Amberoon's Lucre, Statum and Manatoko platforms are purpose-built for exactly this moment — governance-first AI for community banks, with the controls, auditability, and regulatory alignment that examiners expect. Contact us to learn how.
